Authors:

(1) Omri Avrahami, Google Research and The Hebrew University of Jerusalem;

(2) Amir Hertz, Google Research;

(3) Yael Vinker, Google Research and Tel Aviv University;

(4) Moab Arar, Google Research and Tel Aviv University;

(5) Shlomi Fruchter, Google Research;

(6) Ohad Fried, Reichman University;

(7) Daniel Cohen-Or, Google Research and Tel Aviv University;

(8) Dani Lischinski, Google Research and The Hebrew University of Jerusalem.

Table of Links

- Abstract and Introduction

- Related Work

- Method

- Experiments

- Limitations and Conclusions

- A. Additional Experiments

- B. Implementation Details

- C. Societal Impact

- References

A. Additional Experiments

Below, we provide additional experiments that were omitted from the main paper. In Appendix A.1 we provide additional comparisons and results of our method, and demonstrate its non-deterministic nature in Appendix A.2. In Appendix A.3 we compare our method against two na¨ıve baselines. Appendix A.4 presents the results of our method using different feature extractors. Lastly, in Appendix A.6 we provide results that reduce the concerns of dataset memorization by our method.

A.1. Additional Comparisons and Results

In Figure 9 we provide a qualitative comparison on the automatically generated prompts, and in Figure 10 we provide an additional qualitative comparison.

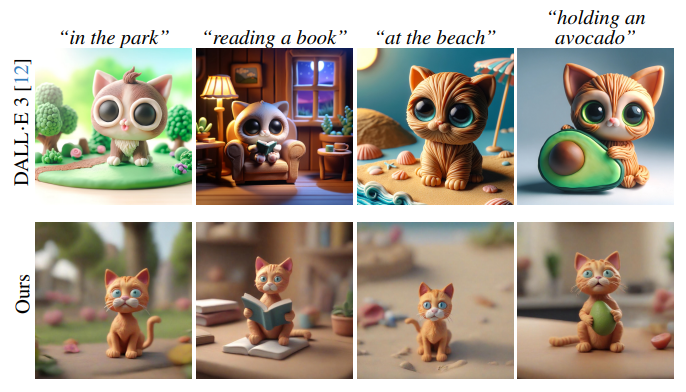

Concurrently to our work, the DALL·E 3 model [12] was commercially released as part of the paid ChatGPT Plus [53] subscription, enabling generating images in a conversational setting. We tried, using a conversation, to create a consistent character of a Plasticine cat, as demonstrated in Figure 11. As can be seen, the generated characters share only some of the characteristics (e.g., big eyes) but not all of them (e.g., colors, textures and shapes).

In Figure 12 we provide a qualitative comparison of the ablated cases. In addition, as demonstrated in Figure 13, our approach is applicable to consistent generation of a wide range of subjects, without the requirement for them to necessarily depict human characters or creatures. Figure 14 shows additional results of our method, demonstrating a variety of character styles. Lastly, in Figure 15 we demonstrate the ability of creating a fully consistent “life story” of a character using our method.

A.2. Non-determinism of Our Method

In Figures 16 and 17 we demonstrate the non-deterministic nature of our method. Using the same text prompt, we run our method multiple times with different initial seeds, thereby generating a different set of images for the identity clustering stage (Section 3.1). Consequently, the most cohesive cluster ccohesive is different in each run, yielding different consistent identities. This behavior of our method is aligned with the one-to-many nature of our task — a single text prompt may correspond to many identities.

A.3. Naıve Baselines

As explained in Section 4.1, we compared our method against a version of TI [20] and LoRA DB [71] that were trained on a single image (with a single identity). Instead, we could generate a small set of five images for the given prompt (that are not guaranteed to be of the same identity), and use this small dataset for TI and LoRA DB baselines, referred to as TI multi and LoRA DB multi, respectively. As can be seen in Figures 18 and 19, these baselines fail to achieve satisfactory identity consistency.

A.4. Additional Feature Extractors

Instead of using DINOv2 [54] features for the identity clustering stage (Section 3.1), we also experimented with two alternative feature extractors: DINOv1 [14] and CLIP [61] image encoder. We quantitatively evaluate our method with each of these feature extractors in terms of identity consistency and prompt similarity, as explained in Section 4.1. As can be seen in Figure 20, DINOv1 produces higher identity consistency, while sacrificing prompt similarity, whereas CLIP achieves higher prompt similarity at the expense of identity consistency. Qualitatively, as demonstrated in Figure 21, we found the DINOv1 extractor to perform similarly to DINOv2, whereas CLIP produces results with a slightly lower identity consistency.

A.5. Additional Clustering Visualization

In Figure 22 we provide a visualization of the clustering algorithm described in Section 3.1. As can be seen, given the input text prompt “a purple astronaut, digital art, smooth, sharp focus, vector art”, in the first iteration (top three rows), our algorithm divides the generated image set into three clusters: (1) focusing on the astronaut’s head, (2) an astronaut with no face, and (3) a full body astronaut. In the second iteration (bottom three rows), all the clusters share the same identity, that was extracted in the first iteration, as described in Section 3.2, and our algorithm divides them into clusters by their pose.

A.6. Dataset Non-Memorization

Our method is able to produce consistent characters, which raises the question of whether these characters already exist in the training data of the generative model. We employed SDXL [57] as our text-to-image model, whose training dataset is, unfortunately, undisclosed in the paper [57]. Consequently, we relied on the most likely overlapping dataset, LAION-5B [73], which was also utilized by Stable Diffusion V2.

To probe for dataset memorization, we found the top 5 nearest neighbors in the dataset in terms of CLIP [61] image similarity, for a few representative characters from our paper, using an open-source solution [68]. As demonstrated in Figure 23, our method does not simply memorize images from the LAION-5B dataset.

![Figure 9. Qualitative comparison to baselines on the automatically generated prompts. We compared our method against several baselines: TI [20], BLIP-diffusion [42] and IP-adapter [93] are able to correspond to the target prompt but fail to produce consistent results. LoRA DB [71] is able to achieve consistency, but it does not always follow to the prompt, in addition, the generate character is being generated in the same fixed pose. ELITE [90] struggles with following the prompt and also tends to generate deformed characters. Our method is able to follow the prompt, and generate consistent characters in different poses and viewing angles.](https://cdn.hackernoon.com/images/fWZa4tUiBGemnqQfBGgCPf9594N2-9b83zvk.png)

![Figure 10. Additional qualitative comparisons to baselines. We compared our method against several baselines: TI [20], BLIP-diffusion [42] and IP-adapter [93] are able to correspond to the target prompt but fail to produce consistent results. LoRA DB [71] is able to achieve consistency, but it does not always follow to the prompt, in addition, the generate character is being generated in the same fixed pose. ELITE [90] struggles with following the prompt and also tends to generate deformed characters. On the other hand, our method is able to follow the prompt, and generate consistent characters in different poses and viewing angles.](https://cdn.hackernoon.com/images/fWZa4tUiBGemnqQfBGgCPf9594N2-6w93zs4.png)

A.7. Stable Diffusion 2 Results

We experimented with a version of our method that uses the Stable Diffusion 2 [69] model. The implementation is the same as explained in Appendix B.1, with the following changes: (1) The set of custom text embeddings τ in the character representation Θ (as explained in Section 2 in the

main paper ), contains only one text embedding. (2) We used a higher learning rate of 5e-4. The rest of the implementation details are the same. More specifically, we used Stable Diffusion v2.1 implementation from Diffusers [86] library.

As can be seen in Figure 24, when using the Stable Diffusion 2 backbone, our method can extract a consistent character, however, as expected, the results are of a lower quality than when using the SDXL [57] backbone that we use in the rest of this paper.

This paper is available on arxiv under CC BY-NC-ND 4.0 DEED license.