Authors:

(1) Xiao-Yang Liu, Hongyang Yang, Columbia University (xl2427,hy2500@columbia.edu);

(2) Jiechao Gao, University of Virginia (jg5ycn@virginia.edu);

(3) Christina Dan Wang (Corresponding Author), New York University Shanghai (christina.wang@nyu.edu).

Table of Links

2 Related Works and 2.1 Deep Reinforcement Learning Algorithms

2.2 Deep Reinforcement Learning Libraries and 2.3 Deep Reinforcement Learning in Finance

3 The Proposed FinRL Framework and 3.1 Overview of FinRL Framework

3.5 Training-Testing-Trading Pipeline

4 Hands-on Tutorials and Benchmark Performance and 4.1 Backtesting Module

4.2 Baseline Strategies and Trading Metrics

4.5 Use Case II: Portfolio Allocation and 4.6 Use Case III: Cryptocurrencies Trading

5 Ecosystem of FinRL and Conclusions, and References

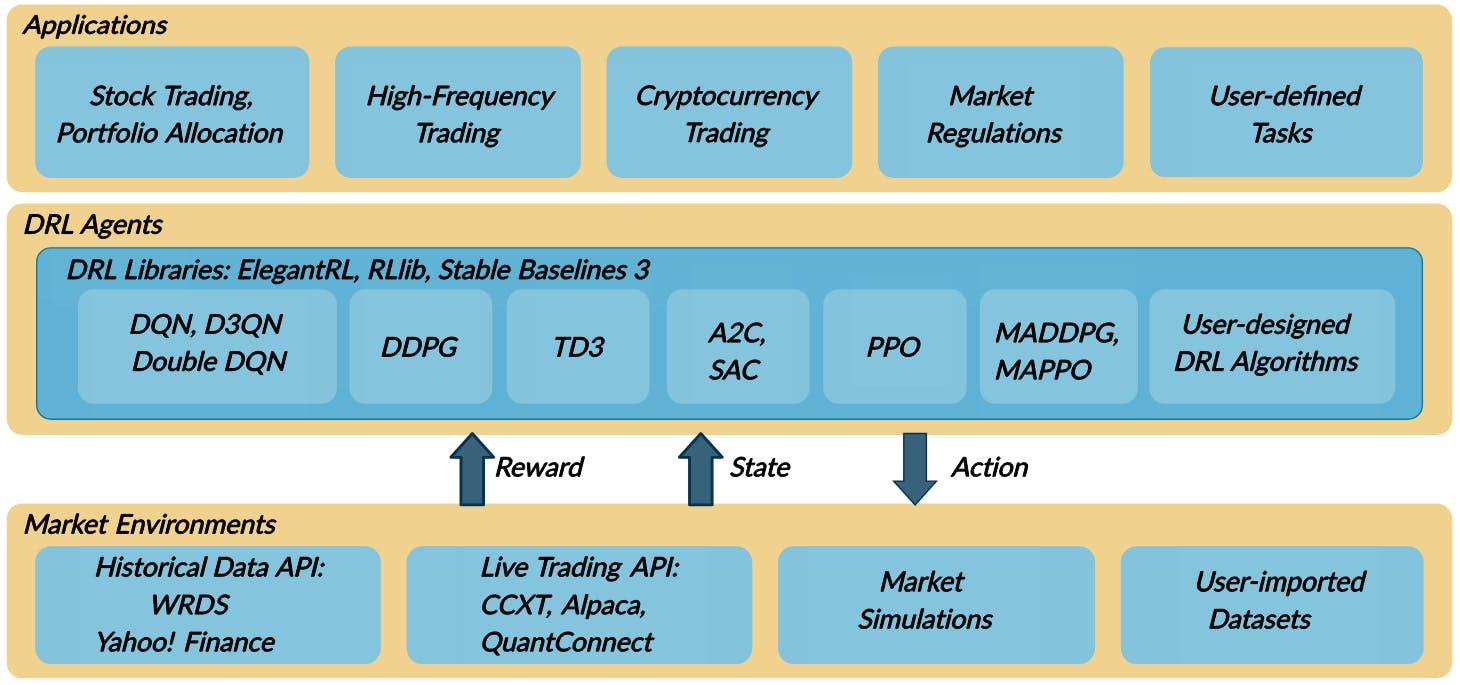

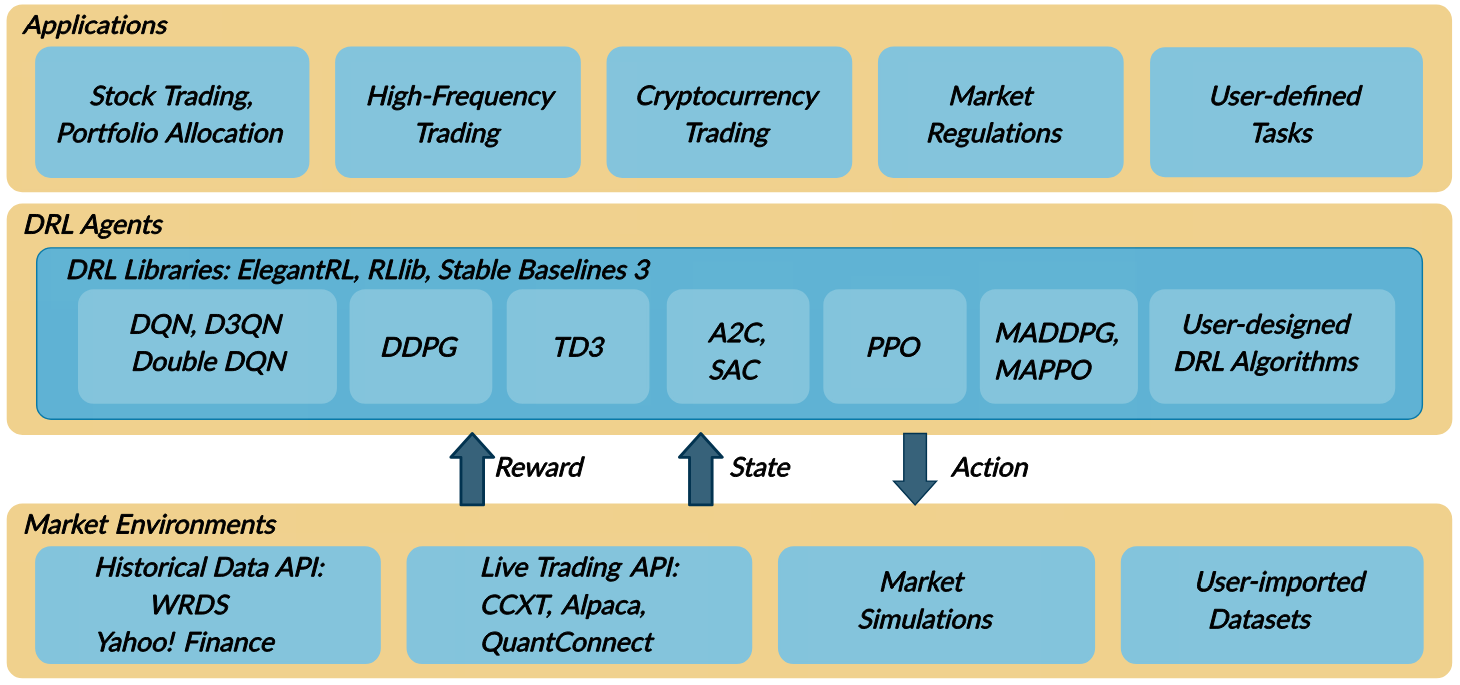

2.2 Deep Reinforcement Learning Libraries

We summarize relevant open-source DRL libraries as follows:

OpenAI Gym [5] provides standardized environments for various DRL tasks. OpenAI Baselines [10] implements common DRL algorithms, while Stable Baselines3 [37] improves on [10] with code cleanup and user-friendly examples.
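To make the idea of a standardized environment concrete, the following is a minimal sketch of the classic Gym-style `reset`/`step` convention, written in plain Python without importing Gym itself. The class `ToyPriceEnv`, the helper `run_episode`, the three-valued action set, and the price-change reward are all hypothetical choices made for illustration; they are not taken from any of the libraries above.

```python
from typing import Callable, Dict, List, Tuple


class ToyPriceEnv:
    """Hypothetical single-asset environment that mimics the classic
    Gym convention: reset() returns an observation, step(action)
    returns (observation, reward, done, info)."""

    def __init__(self, prices: List[float]) -> None:
        if len(prices) < 2:
            raise ValueError("need at least two prices")
        self.prices = prices
        self.t = 0

    def reset(self) -> List[float]:
        # Start a new episode at the first price.
        self.t = 0
        return [self.prices[self.t]]

    def step(self, action: int) -> Tuple[List[float], float, bool, Dict]:
        # Assumed action set: -1 = short, 0 = flat, 1 = long.
        if action not in (-1, 0, 1):
            raise ValueError("action must be -1, 0, or 1")
        price_change = self.prices[self.t + 1] - self.prices[self.t]
        reward = action * price_change  # assumed reward: one-step P&L
        self.t += 1
        done = self.t == len(self.prices) - 1
        return [self.prices[self.t]], reward, done, {}


def run_episode(env: ToyPriceEnv, policy: Callable[[List[float]], int]) -> float:
    """Roll out one episode and return the cumulative reward."""
    obs = env.reset()
    total, done = 0.0, False
    while not done:
        obs, reward, done, _ = env.step(policy(obs))
        total += reward
    return total


if __name__ == "__main__":
    env = ToyPriceEnv([100.0, 101.0, 99.0, 102.0])
    print(run_episode(env, lambda obs: 1))  # always-long policy
```

Because an agent only interacts with the environment through `reset` and `step`, the same training loop can be reused across tasks, which is the main benefit of a shared interface.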

Google Dopamine [7] aims for fast prototyping of DRL algorithms. It features good pluggability and reusability.

RLlib [25] provides highly scalable DRL algorithms. It has a modular framework and is well maintained.

TensorLayer [11] is designed for researchers to customize neural networks for various applications. TensorLayer is a wrapper of TensorFlow and supports OpenAI Gym-style environments. However, it is not user-friendly.

2.3 Deep Reinforcement Learning in Finance

Many recent works have applied DRL to quantitative finance. Stock trading is considered the most challenging task because of its noisy and volatile nature, and various DRL-based approaches [15, 35, 54] have been applied. Volatility scaling was incorporated into DRL algorithms to trade futures contracts by accounting for market volatility in the reward function. News headline sentiments and knowledge graphs, as alternative data, can be combined with price-volume time series to train a DRL trading agent. High-frequency trading using DRL [38] is an active research topic.
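As an illustration of how market volatility can enter a reward function, the sketch below scales a position's one-step return by the ratio of a target volatility to a recent volatility estimate. This is one common form of volatility scaling, shown under stated assumptions; the text does not give the exact formula used in the cited work, and the function names, `target_vol`, and `window` are hypothetical.

```python
import math
from typing import List


def rolling_volatility(returns: List[float], window: int) -> float:
    """Sample standard deviation of the most recent `window` returns."""
    recent = returns[-window:]
    if len(recent) < 2:
        raise ValueError("need at least two returns to estimate volatility")
    mean = sum(recent) / len(recent)
    var = sum((r - mean) ** 2 for r in recent) / (len(recent) - 1)
    return math.sqrt(var)


def volatility_scaled_reward(
    position: float,
    next_return: float,
    past_returns: List[float],
    target_vol: float = 0.01,  # assumed per-step volatility target
    window: int = 20,          # assumed lookback length
    eps: float = 1e-8,         # guards against division by zero
) -> float:
    """Reward = position * (target_vol / estimated_vol) * next_return.

    The scale factor shrinks the reward in turbulent periods and
    enlarges it in calm ones, so rewards are comparable across regimes.
    """
    sigma = rolling_volatility(past_returns, window)
    scale = target_vol / max(sigma, eps)
    return position * scale * next_return


if __name__ == "__main__":
    calm = [0.01, -0.01, 0.01, -0.01]
    turbulent = [0.02, -0.02, 0.02, -0.02]
    print(volatility_scaled_reward(1.0, 0.02, calm))
    print(volatility_scaled_reward(1.0, 0.02, turbulent))
```

With the same position and realized return, the turbulent history yields roughly half the reward of the calm one, because its estimated volatility is twice as large.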

Deep Hedging [6] designs hedging strategies with DRL algorithms that manage the risk of liquid derivatives. It demonstrates two advantages of DRL in mathematical finance: scalability and being model-free. A DRL-driven strategy becomes more efficient as the scale of the portfolio grows. This work points to a possible extension of our FinRL library to other asset classes and topics in mathematical finance.

Cryptocurrencies such as Bitcoin (BTC) [40] are rising in the digital financial market and are considered more volatile than stocks. DRL is also being actively explored for automated trading, portfolio allocation, and market making in cryptocurrencies [20, 39, 41].

This paper is available on arXiv under the CC BY 4.0 DEED license.